Abstract

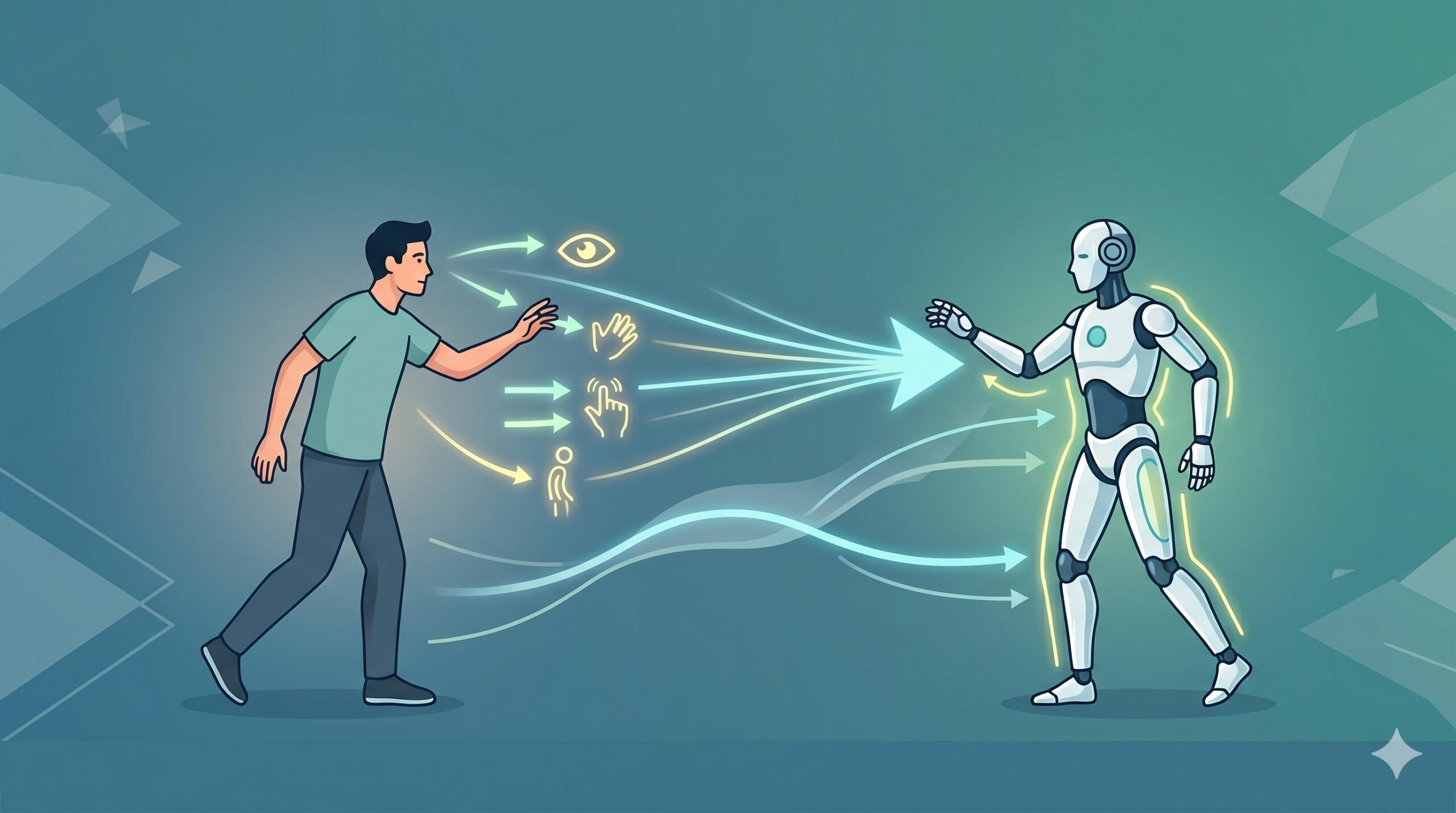

Humanoid robots in shared environments are increasingly expected to interact naturally by interpreting implicit human interaction cues, such as gradual deceleration or torso reorientation, that signal emerging engagement. However, existing humanoid systems primarily rely on explicit recognition (e.g., starting or stopping only after the interactant’s initial or final body pose) and lack mechanisms to continuously infer interaction intent as the human’s whole body moves, which may reduce coordination fluency and interaction quality. In this work-in-progress (WIP) paper, we propose a whole-body implicit interaction framework that uses these early motion cues from interactants to infer interaction intent and modulate humanoid whole-body responses. We formulate the problem as a stability-constrained whole-body control process that preserves zero-moment point (ZMP) feasibility and center-of-mass (CoM) tracking during transitions. The proposed approach will be evaluated in common social interaction scenarios such as handshaking, joint sitting, and object-oriented gestures under both reactive and anticipatory conditions, in which transitions are triggered by explicit engagement or by continuously inferred motion cues, respectively. By grounding intent at the locomotion level, the framework is intended to support earlier arm extension and coordinated whole-body posture adjustment before any explicit human action. We hypothesize that this anticipatory mechanism can improve efficiency (e.g., reduce interaction latency) and coordination fluency while maintaining dynamic stability during close-proximity interaction.